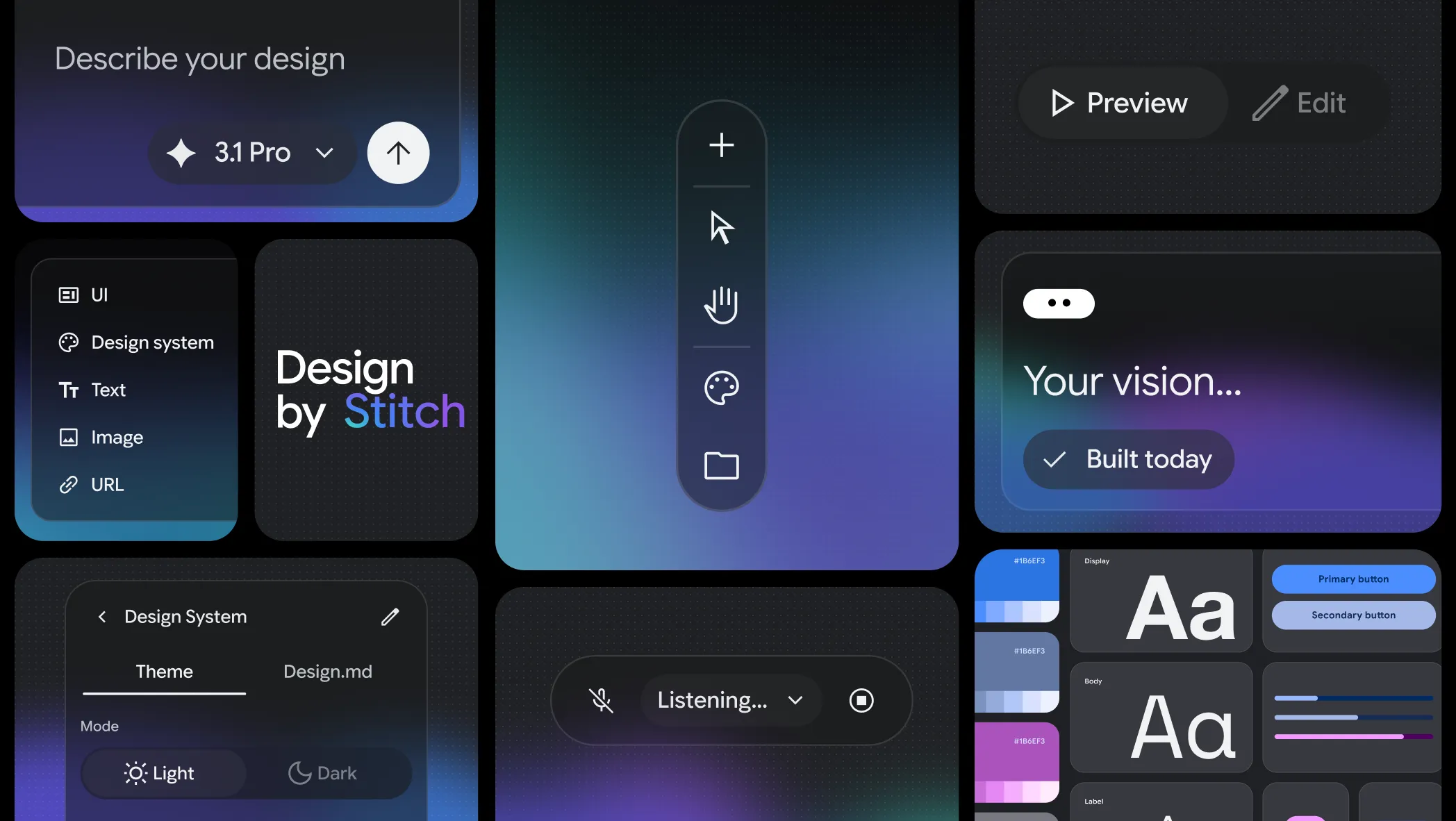

Design UI using AI with Stitch from Google Labs

Stitch is evolving into an AI-native software design canvas that allows anyone to create, iterate and collaborate on high-fidelity UI from natural language.

Stitch is an AI-native software design canvas from Google Labs where you describe what you want in natural language and it generates high-fidelity UI designs. It's "vibe design": the design-side counterpart to vibe coding.

A few things that stand out:

DESIGN.md is a clever concept: it's an agent-friendly markdown file that lets you export or import design rules to and from other design and coding tools. So your design system becomes a portable, LLM-readable spec. That connects directly to the CodeSpeak philosophy of specs-as-source-of-truth, but applied to the visual layer.

The MCP integration is the most relevant part to what we've been discussing: Stitch has an MCP server and SDK so you can leverage its capabilities via skills and tools, and export designs to developer tools like AI Studio. So it's not a closed system. In principle, your AI agent workflow could call Stitch as a tool to generate UI.
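To make "call Stitch as a tool" a bit more concrete, here is a minimal sketch of the message an agent would send to any MCP server to invoke a tool. MCP tool invocations are JSON-RPC 2.0 requests with the method `tools/call`. The tool name `generate_screen` and its `prompt` argument are hypothetical placeholders, not Stitch's documented tool names; a real client would first ask the server which tools it exposes.

```python
import json


def build_tool_call(request_id, tool_name, arguments):
    """Build an MCP `tools/call` request as a JSON-RPC 2.0 message."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }


# Hypothetical tool name and arguments, used only for illustration.
request = build_tool_call(
    1,
    "generate_screen",
    {"prompt": "A login screen with email and password fields"},
)

# Serialized form that would be sent over the MCP transport.
payload = json.dumps(request)
print(payload)
```

The sketch only builds and serializes the request; the transport and authentication are left out because they depend on how the server is deployed.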

The interactive prototype flow is slick: you can stitch screens together and click "Play" to preview an interactive app flow, and it can automatically generate logical next screens based on user actions.

Google just changed the future of UI/UX design... - YouTube
