21 tips to stop hitting Claude's limit

Sivasankar Natarajan

1. Convert files before uploading

• Don't upload PDFs, screenshots, or PPTs. Copy the text into a Google Doc, download as .md, then upload.

2. Plan in Chat, build in Cowork

• Plan structure in Chat first. Then move to Cowork to build the actual file.

3. Ask questions

• Use clear prompts: "I want to [task] so that [success criteria]." Ask Claude to use "AskUserQuestion" to clarify anything unclear before it starts.

4. Stop redoing the whole thing

• If something is wrong, redo only the broken section. Add "no commentary, just the output" to the prompt.

5. Edit your original message

• If Claude responds poorly, click Edit on your original message, fix it, and regenerate. This works in Chat only.

6. Reuse the same prompt structure

• Build a library of prompts. Swap only the variables.
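One way to keep such a library is as plain templates with named slots. The sketch below is only an illustration: the template names, wording, and the `build_prompt` helper are hypothetical, not part of any Claude product.

```python
from string import Template

# Hypothetical prompt library: names and wording are illustrative only.
PROMPTS = {
    "summarize": Template(
        "Summarize the $doc_type below for $audience in at most $max_words words. "
        "No commentary, just the output.\n\n$text"
    ),
    "rewrite": Template(
        "Rewrite the $doc_type below in a $tone tone. "
        "No commentary, just the output.\n\n$text"
    ),
}


def build_prompt(name: str, **variables: object) -> str:
    """Fill one stored template; raises KeyError if a variable is missing."""
    return PROMPTS[name].substitute(**variables)


if __name__ == "__main__":
    # Same structure every time; only the variables change.
    print(build_prompt(
        "summarize",
        doc_type="meeting notes",
        audience="executives",
        max_words=150,
        text="(paste the notes here)",
    ))
```

Because `substitute` fails loudly on a missing variable, a half-filled prompt never gets sent, which saves a wasted message.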

7. Batch tasks into one message

• Combine: "Summarize, list key points, suggest a headline" beats three separate prompts.

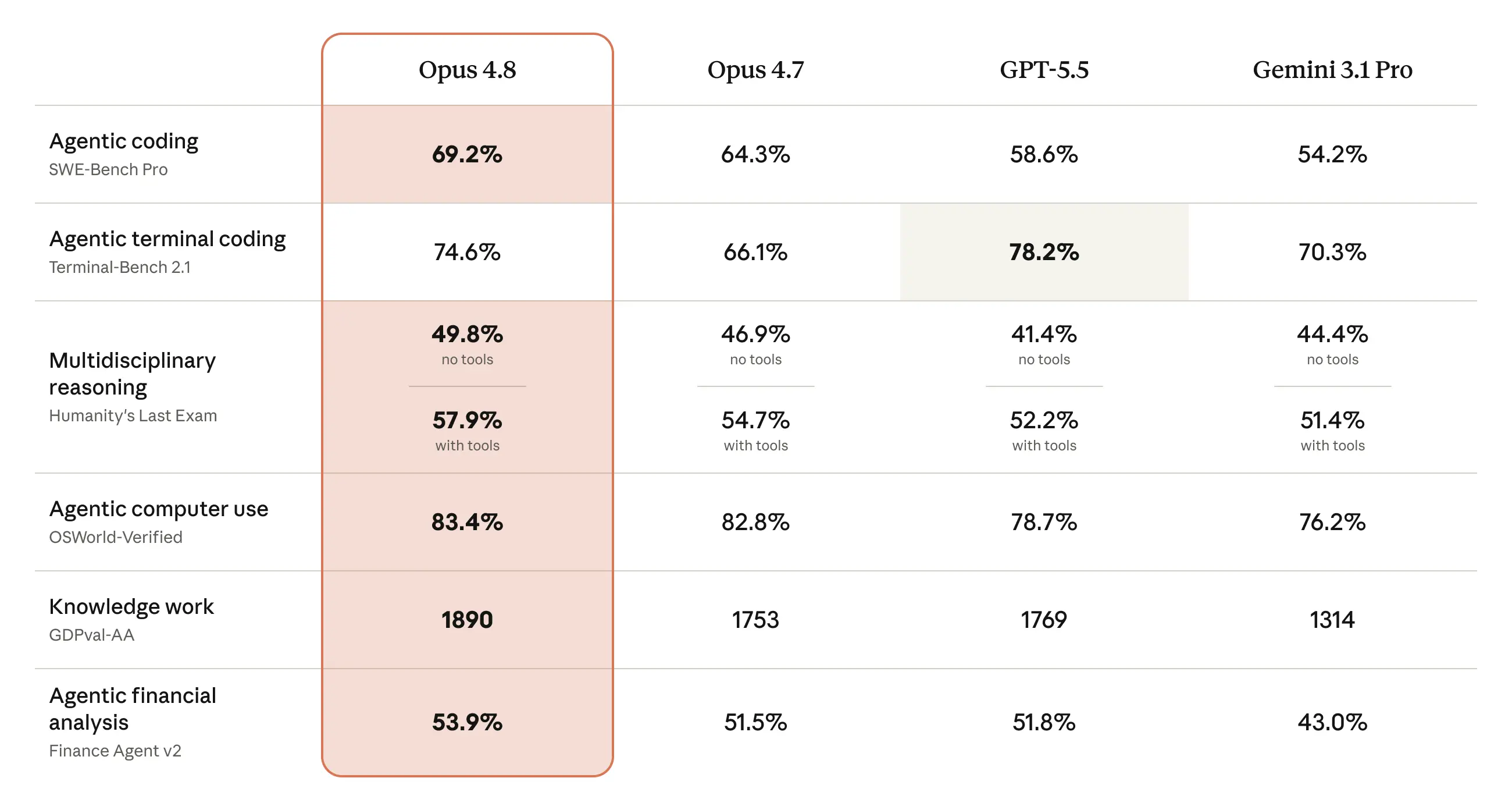

8. Pick the right model

• Sonnet or Haiku for light tasks like grammar fixes. Opus + Extended Thinking for complex work.

9. Keep your files short

• Trim the files in your Cowork folders to under 2,000 words to avoid burning tokens.
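A quick local check can show which files are over that budget before you point Cowork at a folder. This is a rough sketch under stated assumptions: it counts whitespace-separated words (a proxy, since tokens are not the same as words), it only looks at `.md` and `.txt` files, and the helper names are made up for illustration.

```python
from pathlib import Path

WORD_LIMIT = 2000  # threshold taken from the tip above
TEXT_SUFFIXES = {".md", ".txt"}  # assumption: only plain-text files are counted


def count_words(text: str) -> int:
    """Count whitespace-separated words; a rough proxy for token usage."""
    return len(text.split())


def oversized_files(folder: str, limit: int = WORD_LIMIT) -> dict[str, int]:
    """Map each text file whose word count exceeds the limit to that count."""
    over: dict[str, int] = {}
    for path in sorted(Path(folder).rglob("*")):
        if path.is_file() and path.suffix.lower() in TEXT_SUFFIXES:
            words = count_words(path.read_text(encoding="utf-8", errors="ignore"))
            if words > limit:
                over[str(path)] = words
    return over


if __name__ == "__main__":
    # List every file in the current folder that is worth trimming.
    for name, words in oversized_files(".").items():
        print(f"{name}: {words} words")
```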

10. Restart, don't follow up

• If something goes wrong in Cowork, click "Restart conversation from here".

11. Summarize every 15–20 messages

• Ask Claude to summarize key points. Copy the summary, start a new session, paste it as message one.

12. Don't dump your whole folder

• Only include files Claude needs for the specific task.

13. Use Projects for recurring files

• Stop uploading the same PDF every time. Upload once into a Project.

14. New topic = new chat

• Shifting topics? Start a new chat. Don't drag old context into unrelated work.

15. Turn off features you don't need

• Disable web search, connectors, and Explore modes you are not using.

16. Schedule recurring tasks

• Use the /schedule plugin to automate weekly digests.

17. Stop using Claude for things it can't do

• No image generation. No real-time search. Use Grok or another tool for those.

18. Speak your prompts for more context

• Use tools like Wispr to dictate prompts. Spoken prompts carry more context, so you spend fewer messages re-explaining.

19. Set up preferences

• Set your style preferences, and turn off memory if you don't need it.

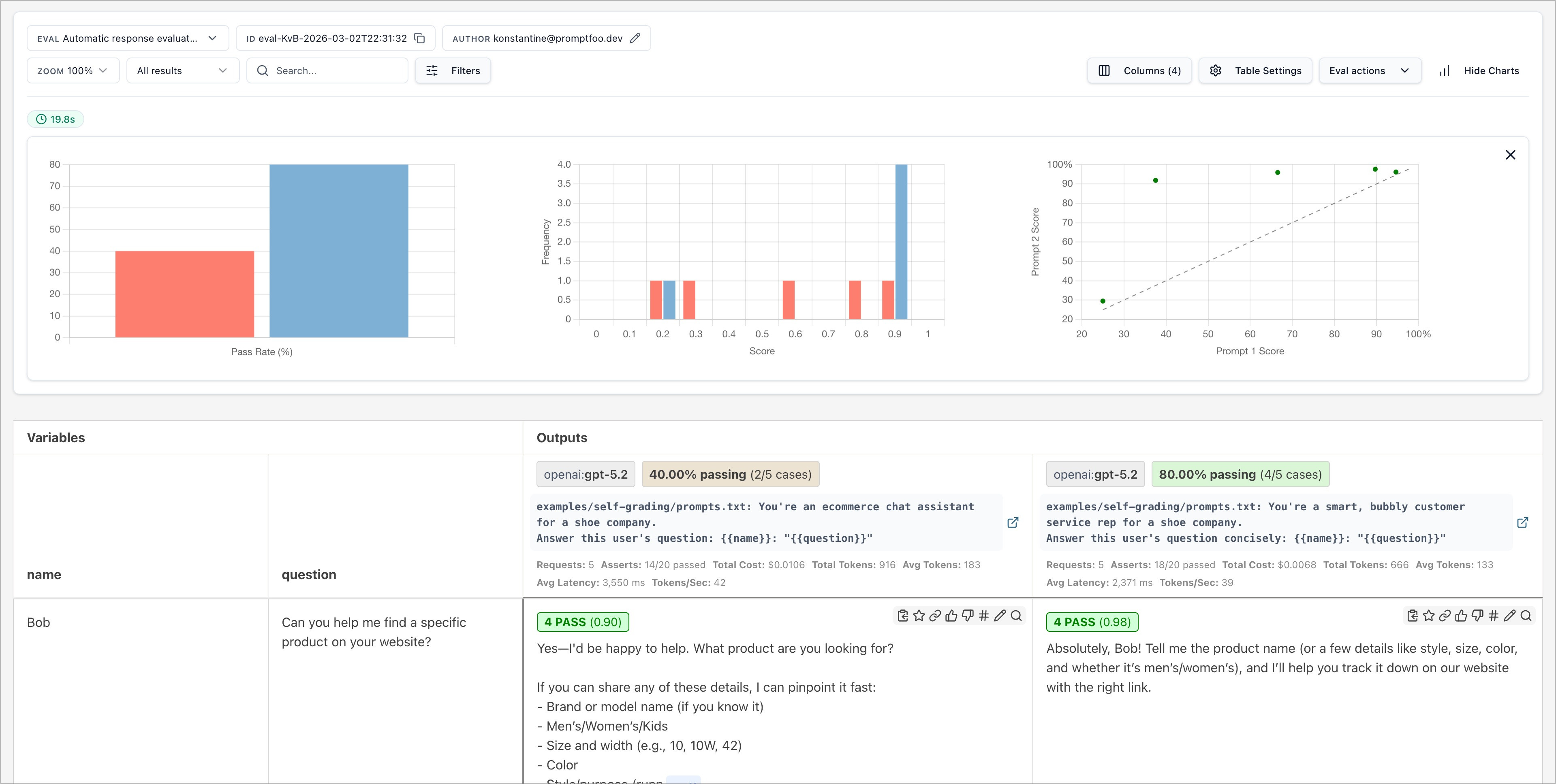

20. Prompt Claude Code tightly

• "Build a bar chart from this CSV. Save as chart.png." Tight instructions, fewer back-and-forths.

21. Spread across the day

• Claude works best in short sessions. Work in the morning, take a break, resume in the afternoon.

The takeaway

Limits are a budget. Heavy users do not get more tokens. They use the ones they have better.

Tighter prompts, smaller files, the right model, and a habit of restarting.