Docker is a tool for managing OS containers.

Those containers used to be based on Linux only, but containers are now also available on Windows 10 and Windows Server 2016.

Containers for Linux and for Windows are not the same, since the operating systems differ. Still, Linux containers can run on Windows by running a Linux VM in Hyper-V.

So one can use both Linux and Windows containers on a Windows host. Older versions of Windows (7, 2012, etc.) can also run Linux containers in a VM, but Windows containers need Windows 10 or Windows Server 2016.

Complicated? Wait, this is just the start. In fact it is not too complicated, and it is useful.

Support for containers is based on virtualization technology included in the OS: Linux, Windows, or even Solaris, which was one of the first to have them.

Essentially this is a set of system-level APIs.

Docker

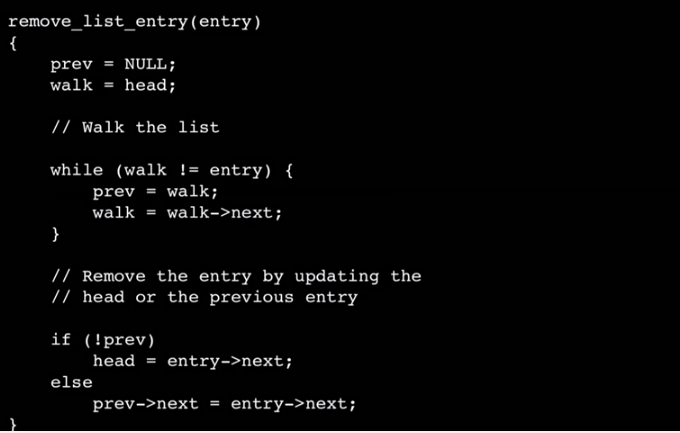

Docker is "just" a convenient tool that helps with managing OS containers. Apparently this was a very important innovation, since the APIs were present in the Linux kernel for years and were not used much before Docker. In fact, a variant of "containers" technology was also included in Windows 8 for Windows Store apps, and enhanced in Windows 10 and Server 2016.

Sun "invented" containers, which made Solaris very efficient at hosting many web sites.

Containers are created from "images" (essentially archive files) that hold the "difference" between a "clean base OS" and the desired content of the OS instance: additional files and, in the case of Windows, also Registry configuration etc. Those "instance" packages are usually much smaller than VM images, since they only include files that are not present in the base OS.

A container image can also be "built" on top of another container image, almost like object-oriented class inheritance or snapshots of virtual machines. This makes it easy to create updates and variations of containers. Very powerful and compact.
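The layering idea can be sketched with a toy model. This is only an illustration, not how Docker stores images: each layer is assumed to be a plain Python dictionary of file paths, and the names `base_os`, `web_layer`, `app_layer` and `flatten` are made up for this example.

```python
from collections import ChainMap

# Hypothetical, simplified layers: each maps a file path to its content.
base_os = {"/bin/sh": "shell binary", "/etc/hosts": "127.0.0.1 localhost"}
web_layer = {"/usr/bin/webserver": "web server binary"}
app_layer = {"/app/index.html": "<h1>Hello</h1>", "/etc/hosts": "127.0.0.1 myapp"}


def flatten(*layers):
    """Merge layers from base to top; files in upper layers win."""
    # ChainMap looks in its first mapping first, so the top layer goes first.
    return dict(ChainMap(*reversed(layers)))


# An image "built" on the base OS, and another built on top of that image.
web_image = flatten(base_os, web_layer)
app_image = flatten(base_os, web_layer, app_layer)

print(sorted(app_image))
print(app_image["/etc/hosts"])
```

Each layer stores only what it adds or changes, which is why `app_layer` is much smaller than the full file system seen inside `app_image`.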

The key benefit of using containers is efficiency: an OS instance running in a container shares the storage and memory of the host OS instead of duplicating them, and it can start in milliseconds, compared with the seconds or even minutes required for a full OS to start. A container instance actually runs as a standard process in the host OS, nothing too complicated.

Thanks to those special virtualization APIs in the OS kernel, such a process can present its own view of the resources that are visible to applications running inside the container. That is the key "magic".

DevOps and Docker

Docker and Azure: Design, Deploy, and Scale


So containers are an efficient and convenient way to package an application with the resources it requires, and Docker is a very popular tool for managing them. But this is not all, it is just the beginning!

Since containers are lightweight and convenient, they are a perfect match for "cloud" applications, such as those using "micro-services architectures" that can have a very large number of instances.

Docker is good for manual, interactive management of a few containers in one host OS.

For managing hundreds or thousands of containers some more powerful and more complex tools are needed and available. After all, Docker is a startup business and they also need "exit strategy" for making some money. There comes

Docker Swarm, a commercial platform for managing containers in many VM hosts. There are some alternative solutions for same of similar purpose, like

Mesos DCOS,

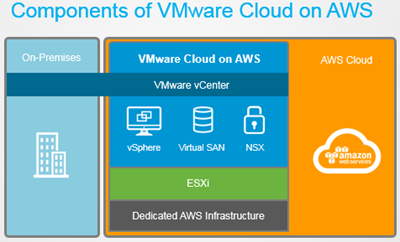

Kubernetes by Google etc. Microsoft Azure has its own Fabric platform, but it conveniently supports all major containers management tools, making it easy to deploy and configure required clusters of resources for creating and managing containers of various kinds.

Containers are efficient, but because of how they are implemented, their security is limited to the process isolation available in the host OS. So when implementing containers in Windows Server 2016, Microsoft decided to create not one but two variants: "Windows Containers" and "Hyper-V Containers". As the names suggest, the first kind is the standard OS-based one, and the second is based on Hyper-V virtual machine technology. It gives a running container instance the same security isolation as a VM, at the cost of slightly more overhead than Windows Containers.

Get started with Docker for Windows - Docker

PowerShell For Docker | MSDN

Windows Containers Quick Start

Getting Started with Windows Containers – Julien Corioland – Technical Evangelist @ Microsoft

Windows Containers FAQ

Azure Container Service | Microsoft Azure

Pricing - Container Service | Microsoft Azure

Azure Container Registry preview | Blog | Microsoft Azure
